OpenVZ virtualization

OpenVZ is an operating system-level virtualization technology based on the Linux kernel and operating system. OpenVZ allows a physical server to run multiple isolated operating system instances, known as Virtual Private Servers (VPS) or Virtual Environments (VE).

Compared with virtual machines such as VMware and paravirtualization technologies like Xen, OpenVZ offers the least flexibility in the choice of operating system: both the guest and host OSes must be Linux (although different VEs can run different Linux distributions). In return, OpenVZ's operating system-level virtualization provides better performance, scalability, density, dynamic resource management, and ease of administration than the alternatives. According to the OpenVZ website, the performance penalty compared with standalone servers is only 1-3%.

OpenVZ is licensed under the GPL version 2.

OpenVZ consists of a kernel and a set of user-level tools.

Kernel

The OpenVZ kernel is a modified Linux kernel that adds the notion of a Virtual Environment. The kernel provides virtualization, isolation, resource management, and checkpointing. The OpenVZ project supports several mainstream kernels as well as the SUSE10 kernel.

Virtualization and Isolation

Each VE is a separate entity, and from its owner's point of view it looks like a real physical server. Each VE has its own:

- Files

  - System libraries, applications, virtualized /proc and /sys, virtualized locks, etc.

- Users and groups

  - Each VE has its own root user, as well as other users and groups.

- Process tree

  - A VE sees only its own processes (starting from init). PIDs are virtualized, so the init PID is 1, as it should be.

- Network

  - A virtual network device, which allows a VE to have its own IP addresses as well as its own set of netfilter (iptables) and routing rules.

- Devices

  - If needed, any VE can be granted access to real devices such as network interfaces, serial ports, disk partitions, etc.

- IPC objects

  - Shared memory, semaphores, messages.

And so on.

Resource management

Because all VEs share the same kernel, resource management is of paramount importance. Each VE must stay within its boundaries and not affect other VEs in any way, and this is what resource management ensures.

OpenVZ resource management consists of three components: two-level disk quota, fair CPU scheduler, and user beancounters. All of these resources can be changed while a VE is running; there is no need to reboot. For example, if you want to give a VE less memory, you just change the appropriate parameters on the fly. This is either very hard or not possible at all with other virtualization approaches, such as virtual machines or hypervisors.

Two-Level Disk Quota

The owner (root) of the host system (OpenVZ) can set up per-VE disk quotas in terms of disk blocks and i-nodes (roughly, the number of files). This is the first level of disk quota. In addition, the owner (root) of a VE can use the usual quota tools inside that VE to set standard UNIX per-user and per-group disk quotas.

If you want to give a VE more disk space, you just increase its disk quota. There is no need to resize disk partitions.

Fair CPU scheduler

The CPU scheduler in OpenVZ is also two-level. On the first level, the scheduler decides which VE gets the next CPU time slice, based on per-VE cpuunits values. On the second level, the standard Linux scheduler decides which process to run in that VE, using standard Linux process priorities.

An OpenVZ administrator can assign different cpuunits values to different VEs, and CPU time is distributed among them proportionally.

It is also possible to cap CPU time, for example to limit a VE to 10% of the available CPU time.
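The proportional split described above can be illustrated with a small calculation. This is only a sketch of the arithmetic, not of the scheduler itself: it assumes all VEs are busy at the same time, and the VE IDs and cpuunits values are invented for the example.

```python
def cpu_shares(cpuunits):
    """Map each VE ID to its fraction of CPU time when all VEs compete."""
    total = sum(cpuunits.values())
    if total <= 0:
        raise ValueError("total cpuunits must be positive")
    return {veid: units / total for veid, units in cpuunits.items()}


# Hypothetical VE IDs and cpuunits values, chosen only for illustration.
shares = cpu_shares({101: 1000, 102: 2000, 103: 1000})
for veid, share in sorted(shares.items()):
    print(f"VE {veid}: {share:.0%} of CPU time when all VEs are busy")
```

Only the ratio between the values matters in this model: doubling every cpuunits value leaves the shares unchanged.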

User Beancounters

User Beancounters are a set of per-VE counters, limits, and guarantees. About 20 parameters are carefully chosen to cover all aspects of VE operation, so that no single VE can abuse a resource that is limited for the whole node and thereby harm other VEs.

The resources accounted for and controlled are mainly memory and various in-kernel objects such as IPC shared memory segments and network buffers. Each resource can be seen in /proc/user_beancounters and has five values associated with it: current usage, maximum usage (over the lifetime of the VE), barrier, limit, and fail counter. The meaning of barrier and limit is parameter-dependent; in short, they can be thought of as a soft limit and a hard limit. If a resource hits its limit, its fail counter is incremented, so a VE owner can see whether something bad is happening by analyzing the output of /proc/user_beancounters inside the VE.
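As a rough sketch of that kind of analysis, the snippet below parses beancounter-style text and lists the resources whose fail counter is non-zero. It assumes the layout seen inside a single VE, with one row per resource ending in the five values named above; the column labels (held, maxheld, failcnt) and all numbers in SAMPLE are illustrative and are not taken from a real system.

```python
# Invented sample in the assumed layout of /proc/user_beancounters.
SAMPLE = """\
Version: 2.5
       uid  resource           held    maxheld    barrier      limit    failcnt
      101:  kmemsize         803866    1246758    2457600    2621440          0
            numproc              12         20         65         65          3
            privvmpages        1244       2048      49152      53575          0
"""

FIELDS = ("held", "maxheld", "barrier", "limit", "failcnt")


def parse_beancounters(text):
    """Return {resource: {field: value}} for every resource row in text."""
    counters = {}
    for line in text.splitlines():
        tokens = line.split()
        # A resource row ends with a name followed by five integers;
        # the version and header lines do not match and are skipped.
        if len(tokens) < 6 or not all(t.isdigit() for t in tokens[-5:]):
            continue
        counters[tokens[-6]] = dict(zip(FIELDS, map(int, tokens[-5:])))
    return counters


def failed_resources(counters):
    """Names of resources that have hit their limit at least once."""
    return sorted(name for name, c in counters.items() if c["failcnt"] > 0)


counters = parse_beancounters(SAMPLE)
print(failed_resources(counters))
```

On a live VE the same functions could be fed the contents of /proc/user_beancounters instead of SAMPLE, provided the real file matches the assumed layout.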

Checkpointing and Live Migration

A live migration and checkpointing feature was released for OpenVZ in the middle of April 2006. It allows a VE to be migrated from one physical server to another without shutting down or restarting the VE. The process is known as checkpointing: a VE is frozen and its whole state is saved to a file on disk. This file can then be transferred to another machine, where the VE can be unfrozen (restored). The delay is a few seconds, and it is not downtime, just a delay.

Since every piece of VE state, including open network connections, is saved, from the user's perspective it looks like a delay in response: say, one database transaction takes longer than usual, then everything continues as normal and the user does not notice that the database is already running on another machine.

This feature makes possible scenarios such as upgrading your server without rebooting it: if your database needs more memory or CPU resources, you buy a newer, better server, live migrate your VE to it, and then increase its limits. If you want to add more RAM to a server, you migrate all its VEs to another one, shut the server down, add memory, start it again, and migrate all the VEs back.

User-level tools

OpenVZ comes with command-line tools to manage VEs (vzctl), as well as tools to manage software in VEs (vzpkg).

vzctl

This is a simple high-level command-line tool to manage a VE.

- vzctl create VEID [--ostemplate <name>] [--config <name>]

  - Creates a new Virtual Environment with the numeric ID VEID, based on the specified OS template (a Linux distro) and with resource management parameters taken from the specified config sample. Both --ostemplate and --config are optional; their defaults are given in the global configuration file.

- vzctl start VEID

  - Starts the given VE. Starting means creating a Virtual Environment context within the kernel, setting all the resource management parameters, and running the VE's /sbin/init in that context.

- vzctl stop VEID

  - Stops the given VE. A VE can also be stopped (or rebooted) by its owner using the standard /sbin/halt or /sbin/reboot commands.

- vzctl exec VEID <command>

  - Executes a command inside the given VE. For example, to see the list of processes inside VE 102, use vzctl exec 102 ps ax.

- vzctl enter VEID

  - Opens a shell in the VE. This is useful if, say, sshd is dead in this VE and you want to troubleshoot the problem.

- vzctl set VEID --parameter <value> [...] [--save]

  - Sets a parameter for the VE. There are many different parameters. To add an IP address to a VE, use vzctl set VEID --ipadd x.x.x.x --save. To set the VE disk quota, use vzctl set VEID --diskspace soft:hard --save. To set the VE kernel memory barrier and limit, use vzctl set VEID --kmemsize barrier:limit --save.
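Put together, the commands listed above form a typical provisioning sequence. The sketch below only builds and prints the command lines rather than running them; the helper name provision_commands, the template name, the IP address, and the quota and memory values are all placeholders invented for illustration.

```python
import shlex


def provision_commands(veid, ostemplate, ip, diskspace, kmemsize):
    """Build the vzctl command lines to create, configure, and start a VE."""
    ve = str(veid)
    return [
        ["vzctl", "create", ve, "--ostemplate", ostemplate],
        ["vzctl", "set", ve, "--ipadd", ip, "--save"],
        ["vzctl", "set", ve, "--diskspace", diskspace, "--save"],
        ["vzctl", "set", ve, "--kmemsize", kmemsize, "--save"],
        ["vzctl", "start", ve],
    ]


# All argument values below are hypothetical examples.
commands = provision_commands(
    102, "fedora-core-5", "192.0.2.10", "1048576:1153434", "2457600:2621440"
)
for argv in commands:
    print(shlex.join(argv))
```

Keeping each command as an argument list means the same data could later be handed to a process runner without going through shell quoting.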

Templates and vzpkg

Templates are precreated images used to create a new VE. Basically, a template is a set of packages, and a template cache is a tarball of a chroot environment with those packages installed. During vzctl create, the tarball is unpacked. Thanks to the template cache technique, a new VE can be created in seconds.

vzpkg is a set of tools that facilitate template cache creation. It currently supports rpm and yum-based repositories. To create a template of, say, the Fedora Core 5 distribution, you need to specify a set of yum repositories that carry FC5 packages, and a set of packages to be installed. In addition, pre- and post-install scripts can be employed to further optimize or modify a template cache. All of the above data (repositories, lists of packages, scripts, GPG keys, etc.) form the *template metadata*. Given the template metadata, a template cache can be created automatically by running the vzpkgcache utility. vzpkgcache downloads and installs the listed packages into a temporary VE and packs the result as a template cache.

Template caches for non-RPM distros can be created as well, although this is more of a manual process. For example, there is a HOWTO with detailed instructions on how to create a Debian template cache.

The following template caches (a.k.a. precreated templates) are currently (as of May 2006) available:

- Fedora Core 3

- Fedora Core 4

- Fedora Core 5

- CentOS 4 (4.3)

- Gentoo 2006.0 (20060317)

- openSUSE 10

OpenVZ distinct features

Scalability

Because OpenVZ employs a single-kernel model, it scales as well as the Linux 2.6 kernel, meaning it supports up to 64 CPUs and up to 64 GB of RAM. A single virtual environment can be scaled up to the whole physical box, i.e. use all the CPUs and all the RAM.

Indeed, some people use OpenVZ with a single Virtual Environment. This may seem strange at first sight, but given that a single VE can use all of the hardware resources with native performance, and that you gain benefits such as hardware independence, resource management, and live migration, it is an obvious choice in many scenarios.

Density

OpenVZ is able to host hundreds of Virtual Environments on decent hardware (the main limitations are RAM and CPU).

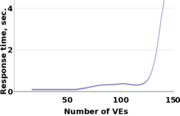

The graph shows how the response time of a VE's Apache web server depends on the number of VEs. Measurements were done on a machine with 768 MB (3/4 GB) of RAM; each VE was running the usual set of processes: init, syslogd, crond, sshd, and apache. The Apache daemons were serving static pages, which were fetched by http_load, and the first response time was measured. As the number of VEs grows, the response time increases because of RAM shortage and excessive swapping.

In this scenario it is possible to run up to 120 such VEs on 768 MB (3/4 GB) of RAM. This extrapolates linearly, so it is possible to run up to about 320 such VEs on a box with 2 GB of RAM.
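The extrapolation is simple proportional arithmetic, sketched below. It assumes that the per-VE memory footprint stays constant as RAM grows, which is the linear model stated above; the function name is made up for the example.

```python
def extrapolate_ves(measured_ves, measured_ram_mb, target_ram_mb):
    """Scale a measured VE count linearly with the amount of RAM."""
    return measured_ves * target_ram_mb // measured_ram_mb


# 120 VEs were measured on 768 MB; estimate the count for 2 GB (2048 MB).
estimate = extrapolate_ves(120, 768, 2048)
print(estimate)  # 320
```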

Mass-management

The owner (root) of an OpenVZ physical server (also known as a Hardware Node) can see all the VE processes and files. That makes mass-management scenarios possible. Consider VMware or Xen used for server consolidation: in order to apply a security update to your 10 virtual servers, you have to log in to each one and run an update procedure, just as you would with ten real physical servers.

With OpenVZ, you can run a simple script that updates all (or just some selected) VEs at once.
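A minimal sketch of such a script is shown here, built on the vzctl exec command described earlier. The list of VE IDs is passed in explicitly, and the update command (yum -y update) is an assumption that fits RPM-based VEs only; by default the function performs a dry run and just prints what it would execute.

```python
import subprocess


def update_all(veids, update_cmd=("yum", "-y", "update"), dry_run=True):
    """Run update_cmd inside each VE via `vzctl exec`; return exit codes."""
    results = {}
    for veid in veids:
        argv = ["vzctl", "exec", str(veid), *update_cmd]
        if dry_run:
            # Only show the command; nothing is executed in dry-run mode.
            print(" ".join(argv))
            results[veid] = None
        else:
            results[veid] = subprocess.run(argv).returncode
    return results


# Hypothetical VE IDs; dry run only.
results = update_all([101, 102, 103])
```

Calling update_all with dry_run=False on the Hardware Node would run the command in each VE in turn and collect the exit codes, so failed updates can be spotted afterwards.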

Usage scenarios

The following usage scenarios are common to all virtualization technologies. However, a unique feature of OS-level virtualization like OpenVZ is that you do not pay a large virtualization overhead, which makes these scenarios more appealing.

- Security

  - You put each network service (such as Apache, a mail server, a DNS server, etc.) into a separate Virtual Environment. If an attacker finds a security hole in one of the applications and breaks in, all he can abuse is that one service; since all the other services are in separate VEs, he cannot reach them.

- Server Consolidation

  - Currently, most servers are underutilized. Using OpenVZ, such servers can be consolidated by migrating them into Virtual Environments. The savings are in rack space, electricity bills, and management effort.

- Hosting

  - Apparently, OS-level virtualization is the only type of virtualization that hosters can afford and use to sell cheap (around $15 a month) VEs to their customers. Note that each VE comes with full root access, meaning the VE owner can reinstall anything and even use things such as iptables (firewall rules).

- Development and Testing

  - Developers and testers usually need access to a handful of Linux distributions, and they need to reinstall them from scratch very often. With OpenVZ, they can have it all on one box, without any need to reboot and with native performance, and a new VE can be created in just a minute. Cloning a VE is also very simple: you just copy the VE area and the configuration file.

- Educational

  - Each student and his dog can have a VE. One can play with different Linux distributions. A new VE can be (re)created in a minute.